Yes, you read it right. Your ads often get placed next to kids’ content.

And the concerning part? Your brand safety filters don’t identify these placements as unsafe, because technically they aren’t. What they are, however, are brand-unsuitable placements.

It is a systemic gap in how traditional brand safety tools classify content and in how demographic targeting works, and it affects more campaigns than most advertisers realize.

In this blog, we break down exactly how this happens through real examples, why your current filters and targeting settings may not be enough, and what it actually takes to ensure your ads are brand safe and brand suitable, in every sense of the word.

Brand Safety & Brand Suitability: They Are Not The Same Thing

Before we get into how this happens, it’s important to understand two terms that are often used interchangeably but mean very different things.

Brand Safety

Brand safety is about making sure your ad doesn’t appear next to content that is harmful, offensive, or controversial — violence, hate speech, adult content, or extremist material. Most advertisers today are aware of brand safety and have some level of filtering in place for it.

Brand Suitability

This goes one level deeper. Brand suitability is not just about avoiding inappropriate placements. It’s about making sure the content your ad appears alongside actually fits the context and sentiment of your brand, your product, and the audience you’re trying to reach.

Here is a simple example. A children’s cartoon is not unsafe content. It carries no violence, no hate speech, nothing harmful. But if you are a premium financial brand targeting urban professionals between 30 and 50, running your ad before that cartoon is a suitability failure, not a safety failure.

The content is perfectly fine. The contextual ad targeting is completely wrong. This is the gap most advertisers are not solving for.
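To make the distinction concrete, here is a minimal sketch in Python of safety and suitability as two separate checks. Everything in it is an assumption made for illustration: the category names, the `Placement` and `BrandProfile` structures, and the matching rules are hypothetical and do not reflect how any particular platform or tool works.

```python
from dataclasses import dataclass

# Hypothetical category names, chosen only for illustration.
UNSAFE_CATEGORIES = {"violence", "hate_speech", "adult", "extremism"}


@dataclass
class Placement:
    title: str
    category: str   # what the content is about
    audience: str   # who the content is made for


@dataclass
class BrandProfile:
    name: str
    target_audience: str
    suitable_categories: set[str]


def is_brand_safe(placement: Placement) -> bool:
    """Safety asks only one question: is the content harmful?"""
    return placement.category not in UNSAFE_CATEGORIES


def is_brand_suitable(placement: Placement, brand: BrandProfile) -> bool:
    """Suitability asks more: is the content safe AND a fit for this brand?"""
    return (
        is_brand_safe(placement)
        and placement.audience == brand.target_audience
        and placement.category in brand.suitable_categories
    )


cartoon = Placement("Animated nursery rhymes", "kids_animation", "children")
finance_brand = BrandProfile(
    "Premium financial brand",
    "adults_30_50",
    {"business", "news", "personal_finance"},
)

print(is_brand_safe(cartoon))                     # True
print(is_brand_suitable(cartoon, finance_brand))  # False
```

The cartoon passes the safety check and fails the suitability check, which is exactly the case a safety-only filter never flags.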

Why Do Basic Brand Safety Tools Fail to Identify Brand Suitability Issues?

Here are the two major reasons why your ads keep appearing beside irrelevant content.

The Content Identification Problem

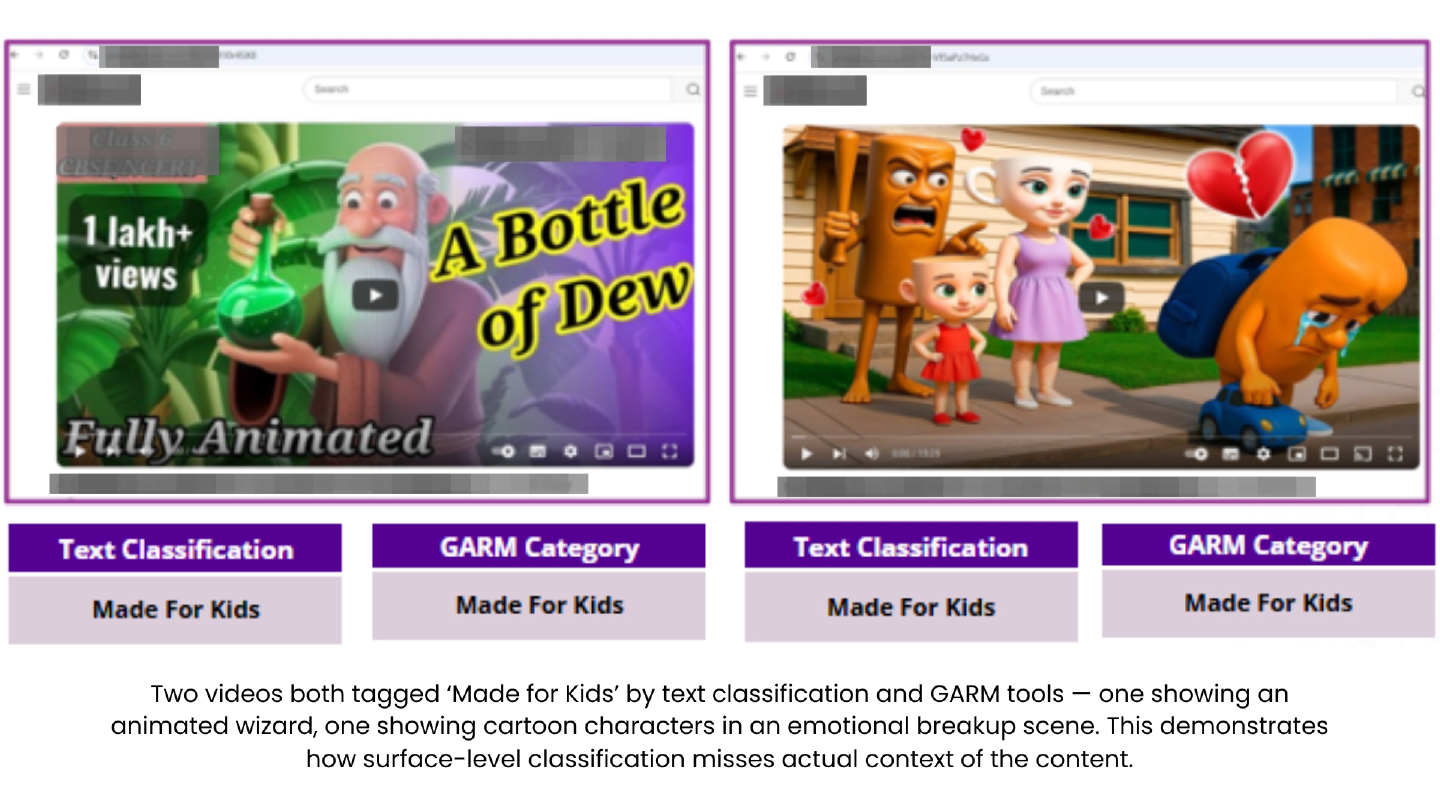

Platforms and traditional brand safety tools do their classification work by reading what is written about a video: the title, the description, the tags, and other metadata. They do not actually watch the video.

What’s written about a piece of content and what’s actually inside it can be two entirely different things. A video can be tagged as a cartoon and correctly land in a kids’ content category, but still carry themes, visuals, or emotional tones that are completely at odds with what that label suggests.

The Audience Targeting Problem

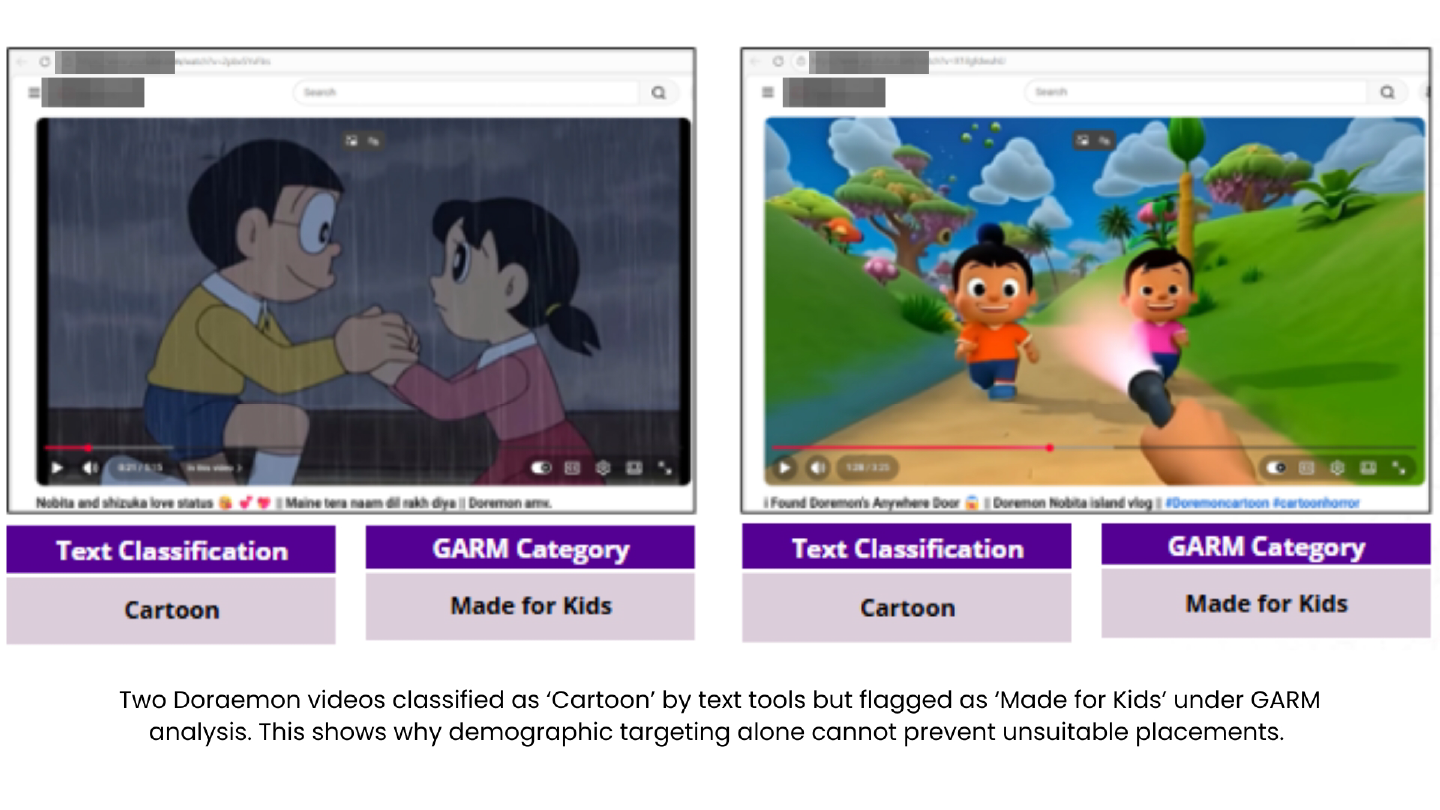

When an advertiser sets up a campaign targeting adults, say, 25- to 45-year-olds, the platform uses data signals like the age registered in Gmail accounts, browsing behavior, and declared gender to identify and reach that profile.

The platform then delivers the ad to that account. Technically, it has done exactly what it was asked to do.

But here is what no one accounts for: the platform has no way of knowing who is physically sitting in front of the screen at that moment. The account belongs to an adult, but the viewer could be a child. This can only be prevented if content is classified not just by its title and tags, but by what it actually contains: its context, its sentiment, and frame-level analysis of the video itself.

How mFilterIt’s Brand Safety Solution Solves Brand Suitability Issues

mFilterIt’s brand suitability and brand safety solution, PACE, addresses both the content gap and the visibility gap that traditional tools leave behind. Here’s how it works and what it delivers for advertisers.

- Analyses videos frame by frame including visuals, audio, on-screen text, sentiment, and scene context to understand what a video actually contains, not just what it is labelled as.

- Classifies placements against GARM brand safety categories with risk levels of high, medium, and low, so exclusions are precise and do not over-block safe inventory.

- Operates in real time, detecting and blocking unsuitable placements before your ad impression is served, not after the budget is already spent.

- Builds a curated whitelist of videos that are not just safe but genuinely suitable for your specific brand, audience, and campaign sentiment.

- Identifies regional and vernacular content with language-specific ML models built for the diverse market, where over a billion hours of regional content is consumed every month.
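As a rough illustration of why tiered risk levels and content-level analysis matter together, the sketch below scores a video by its riskiest analysed segment and blocks it only when that risk exceeds the brand's tolerance. This is not PACE's implementation. The `Risk` enum, the per-segment scores, and both function names are hypothetical, and the sketch assumes some upstream analysis has already assigned a risk level to each segment.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def placement_risk(segment_risks: list[Risk]) -> Risk:
    """Score a video by its riskiest segment, not by its label.

    Assumes at least one analysed segment is provided.
    """
    return max(segment_risks)


def should_block(segment_risks: list[Risk], tolerance: Risk) -> bool:
    """Block only when the video's risk exceeds what the brand tolerates."""
    return placement_risk(segment_risks) > tolerance


# A video labelled as a cartoon whose third segment turns out to be high risk.
mislabelled = [Risk.LOW, Risk.LOW, Risk.HIGH]
# A video with one medium-risk segment.
borderline = [Risk.LOW, Risk.MEDIUM]

print(should_block(mislabelled, Risk.MEDIUM))  # True
print(should_block(borderline, Risk.MEDIUM))   # False
print(should_block(borderline, Risk.LOW))      # True
```

The same borderline video is allowed for a brand that tolerates medium risk and blocked for one that tolerates only low risk, which is how a tiered scheme avoids excluding inventory that a simple safe/unsafe switch would throw away.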

The result: Your ad runs in the right context, in front of the right audience, every time.

Conclusion

Brand safety keeps your ad out of harmful environments.

Brand suitability makes sure it lands in the right one. Both matter. And right now, most advertisers are solving for only one of them, or neither.

So, before you take another campaign live, ask this: Is the content my ad is running next to reinforcing my brand or quietly working against it?

The brands that get this right aren’t just the ones with genuine results. They’re the ones who know, with precision and confidence, exactly where their ads are landing.

To learn more about how we can help, get in touch with our experts today.